EP2: The Bridge to Truth: Why Identification Comes Before Estimation

In the first episode of causal inference from the ground up series, we talked about the 3 core trios: estimand (quantity of interest), estimate (a concrete number), and an estimator (an algorithm). Estimation process is essentially a process moving from an estimand to an estimate by using an estimator. Causal inference shares estimation with traditional statistics and machine learning. However, estimation alone is not sufficient for causal inference (Imbens 2020). A critical, though often neglected, step in causal inference is identification.

Listen to This Episode

Want to dive deeper into identification? This podcast episode provides in-depth information and intuition about the concept. So tune in!

1. The Central Crisis: The Infinite Data Trap

Causal effect identification answers a fundamental question:

Can the causal question we care about be answered using the data we have (together with a set of assumptions)?

Importantly, the type of data we observe — or could realistically collect — determines which identification strategies are even possible.

Identification of causal effects is what distinguishes causal inference from predictive modeling. If a causal effect is not identifiable, an infinite amount of data will not solve the problem (Neal 2020, secs. 1.4, 2.4).

A model trained on petabytes of user data can have 99% validation accuracy but still yield a causal estimate that is “confidently wrong”. Without identification, we are not just producing unreliable results; we are potentially generating misinformation masquerading as truth.

This does not mean we should avoid causal analysis whenever there is violation of assumptions. Rather, it highlights why it is crucial to be explicit about assumptions and to conduct sensitivity analyses that assess how potential violations of those assumptions could affect our conclusions.

2. Identification: Bridging the Gap

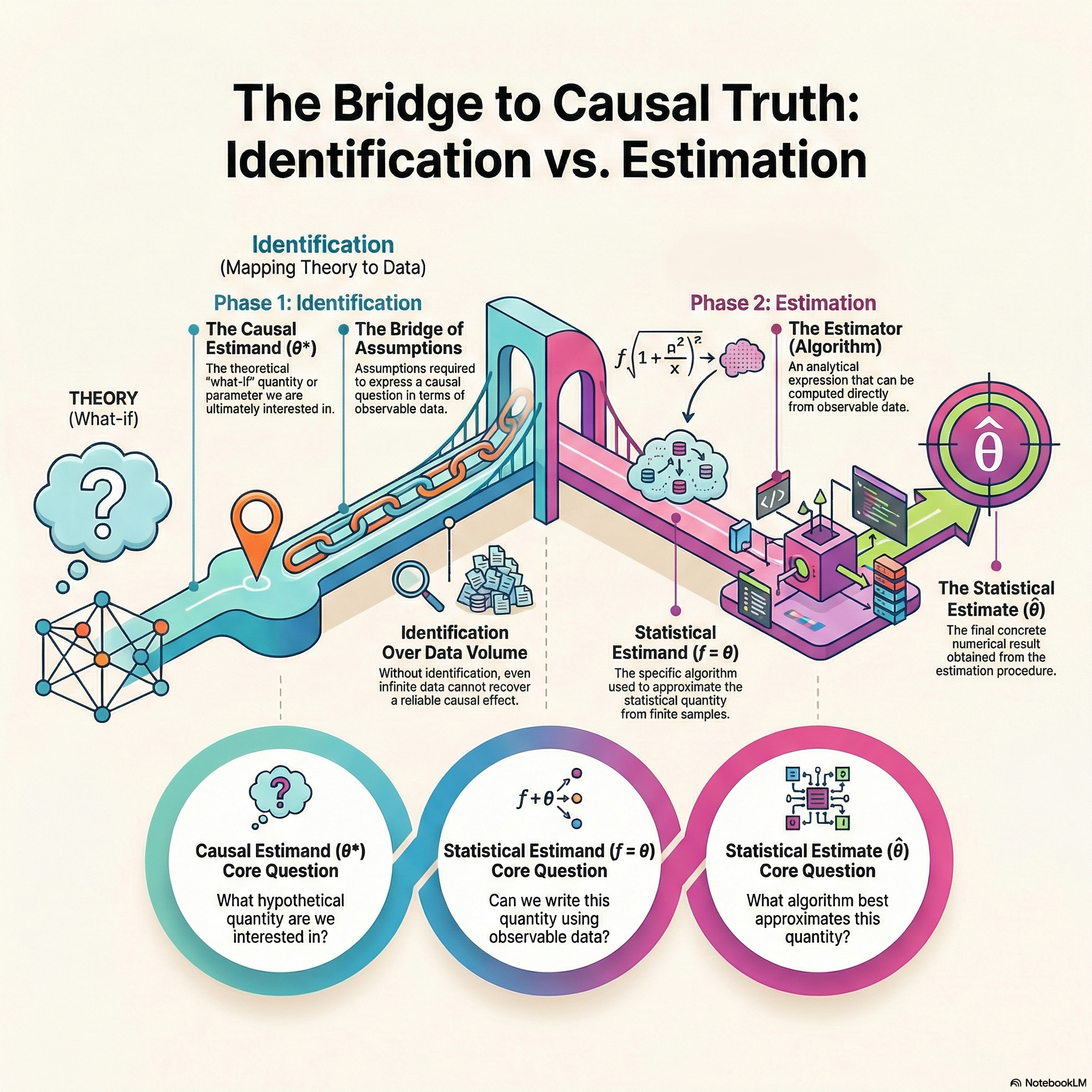

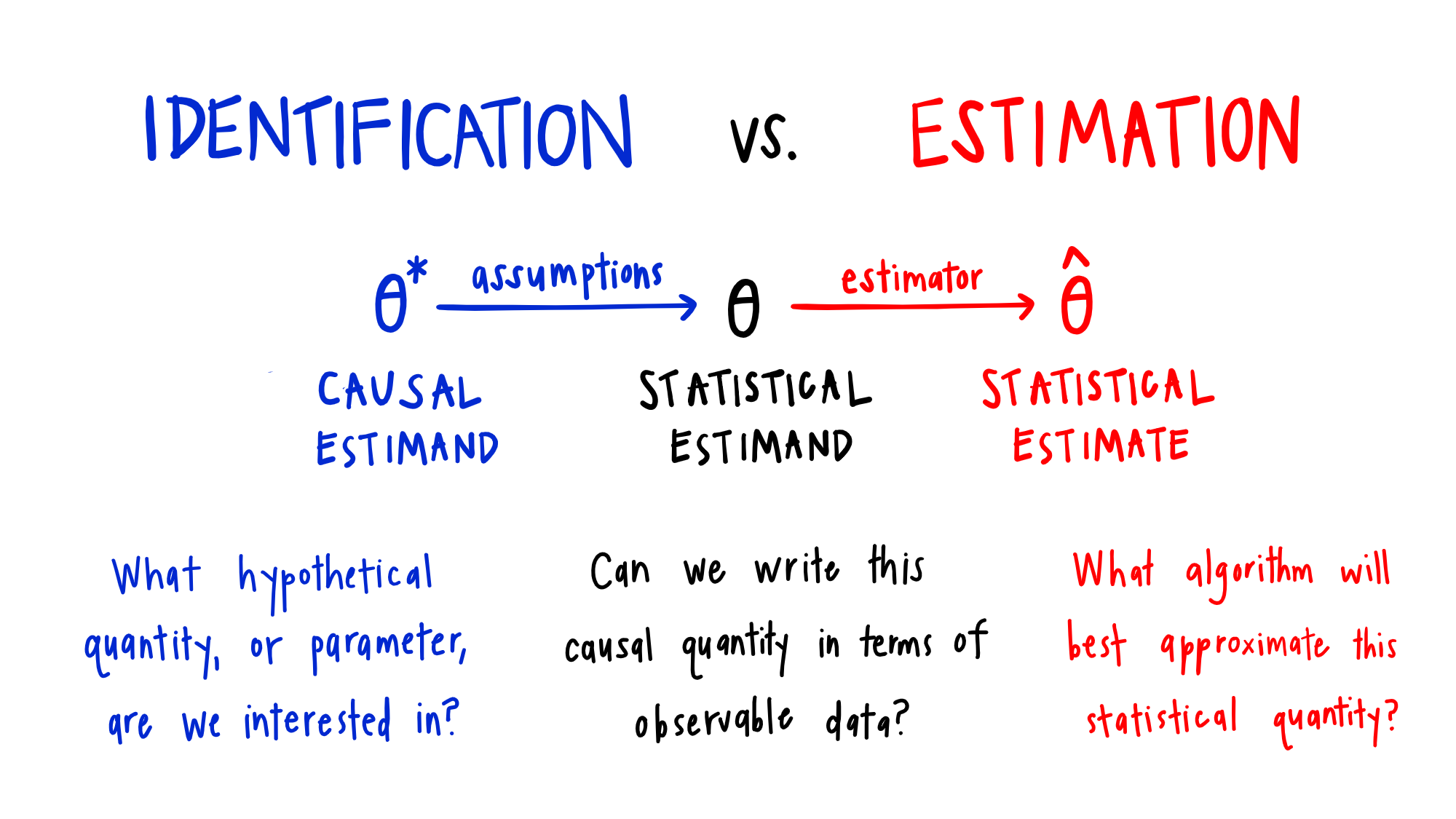

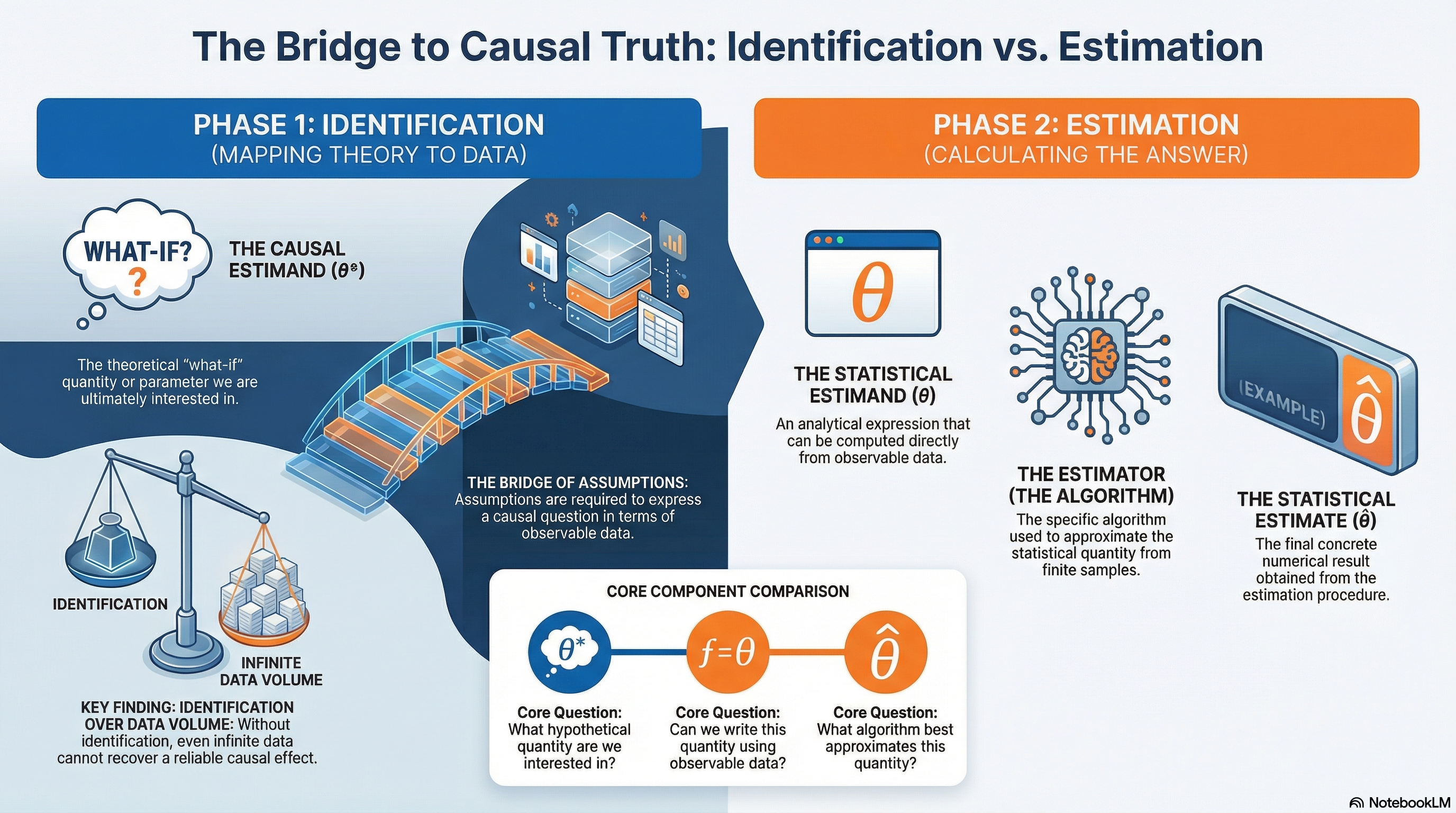

Let’s consider the illustration of causal estimation taken from K. Hoffman’s website. Accordingly:

- the causal estimand is the causal quantity we are ultimately interested in,

- the statistical estimand is an analytical expression of a quantity that can be computed from observable data,

- the statistical estimate is the numerical result we obtain from a specific estimation procedure.

When we say a causal effect is identifiable, we mean that a causal estimand (defined using counterfactual outcomes) can be expressed as a statistical estimand that depends only on observable data.

The arrow labeled ‘Assumptions’ is the only connection between what we want (the causal effect) and what we have (the observed data). It defines the specific conditions under which we can trust that our data is telling us the truth about the causal relationship, rather than just showing us a correlation.

Later, we move to the estimation process and use estimators to approximate the statistical estimand from finite samples. As illustrated in Figure 1, determining whether a causal estimand is identifiable requires evaluating a set of assumptions that link the causal question to the data we observe.

The following figure summarizes the relationship between identification and estimation:

3. E-commerce Example

Let’s consider an example from an e-commerce setting. Suppose we are interested in the question:

Does receiving a promotional email increase the probability that a user makes a purchase?

This is a causal question. We are not merely asking whether users who receive emails purchase more, but whether sending the email causes an increase in purchasing.

Assume we have observational data on 2 million users, including whether they received the promotional email and whether they made a purchase in the following week.

Now suppose that a user’s prior engagement level (for example, how frequently they browse the website) affects both:

- whether they are targeted to receive the email, and

- how likely they are to make a purchase.

If we do not have data or forgot to include the data on prior engagement before the email was sent, the causal effect of the email is not identifiable, because we cannot separate the effect of the email from the effect of users’ underlying engagement.

Even if we were able to collect data on this feature, identification would still require additional assumptions.

For example, another important assumption is the no interference assumption, meaning that one user’s outcome depends only on their own treatment and not on other users’ treatment. If users influence each other’s purchasing behavior (for example, through referrals, shared accounts, or word of mouth), this assumption would be violated, and identification would again fail.

4. Practical Implications

In practice, we can rarely guarantee that all identification assumptions are perfectly satisfied. However, it is important to:

- deliberately evaluate these assumptions,

- consider which assumptions are most critical,

- make explicit trade-offs,

- design analyses that are more robust to assumption violations, and

- conduct sensitivity analyses to assess the potential impact of unobserved confounders.

We will cover specific identification strategies and the assumptions they require and how we conduct sensitivity analysis to ensure robustness in future posts.

In the world of causal inference, identification serves as the critical “bridge of assumptions” that determines whether a theoretical “what-if” question can actually be answered using observable data.

Unlike predictive modeling where more data generally improves performance, causal analysis faces an “Infinite Data Trap”: if an effect is not identifiable, even petabytes of data will produce results that are “confidently wrong”.

To avoid this, we must separate our workflow into two distinct phases — first mapping theory to data by transforming a causal estimand into a statistical estimand via explicit assumptions (like “no interference”), and only then moving to estimation to calculate a numerical result. Ultimately, identifying a causal effect is what distinguishes true scientific insight from mere correlation, requiring us to prioritize the validity of our assumptions over the sheer volume of our data.